Hi there,

A warm welcome to the 378 new subscribers who have joined since the last newsletter! It's time for your monthly update on GA4 and BigQuery.

New on GA4BigQuery

Since the last newsletter, I've added an article that reviews a range of web analytics tools, focusing on whether they can export raw event data to BigQuery. The list is not yet comprehensive, and more vendors will be added soon:

- Which web analytics tools provide a raw event data export to BigQuery?

BigQuery breaking down multi-cloud barriers

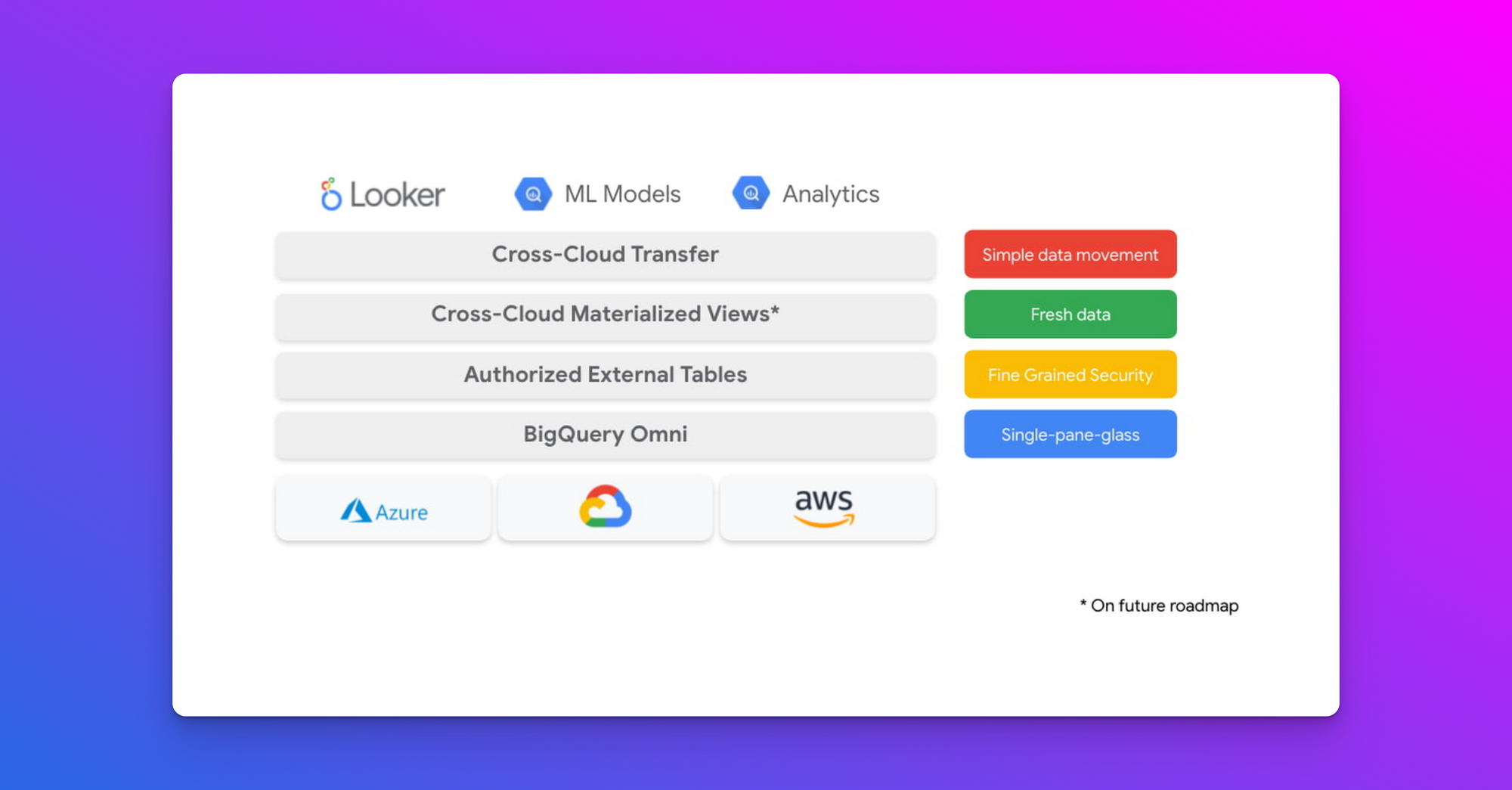

Organizations increasingly store and process data across multiple cloud platforms. This multi-cloud approach offers flexibility, but it often leads to data silos and makes analytics more complex. BigQuery's newly released feature, cross-cloud materialized views, could be a game-changer here.

Cross-cloud materialized views allow customers to very easily create a summary materialized view on GCP from base data assets available on another cloud. Cross-cloud MVs are automatically and incrementally maintained as base tables change, meaning only a minimal data transfer is necessary to keep the materialized view on GCP in sync. (source)
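To make "incrementally maintained" more concrete, here is a toy Python sketch of the general idea behind incremental view maintenance: a summary (event counts per event_name) is updated from only the rows added since the last refresh, instead of re-reading the whole base table. This is not how BigQuery implements it internally; the `SummaryView` class, the append-only base table, and the row layout are all hypothetical assumptions for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SummaryView:
    """Toy stand-in for a materialized view: event counts per event_name.

    Hypothetical illustration only; assumes the base table is append-only.
    """
    counts: Counter = field(default_factory=Counter)
    rows_seen: int = 0         # watermark: base rows already reflected in the view
    rows_transferred: int = 0  # total base rows read across all refreshes

    def refresh(self, base_rows: list) -> None:
        # Read only the rows appended since the last refresh (the "delta").
        delta = base_rows[self.rows_seen:]
        for row in delta:
            self.counts[row["event_name"]] += 1
        self.rows_seen = len(base_rows)
        self.rows_transferred += len(delta)


def full_recompute(base_rows: list) -> Counter:
    """Non-incremental baseline: rebuild the summary from every base row."""
    return Counter(row["event_name"] for row in base_rows)


if __name__ == "__main__":
    base = [{"event_name": "page_view"}, {"event_name": "purchase"}]
    mv = SummaryView()
    mv.refresh(base)                         # initial load reads 2 rows
    base.append({"event_name": "page_view"})
    mv.refresh(base)                         # second refresh reads only 1 new row
    print(mv.counts == full_recompute(base), mv.rows_transferred)
```

The result matches a full recomputation, but the second refresh only reads the one new row. Across clouds, that difference is what keeps the data transfer small.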

Cross-cloud materialized views are powered by BigQuery Omni. Omni is designed to break down the barriers of multi-cloud data analytics, allowing users to run BigQuery's powerful query engine on data that resides in other cloud services like Amazon S3 or Azure Blob Storage without moving it to Google Cloud.

This feature not only streamlines data workflows but also reduces the cost and technical hurdles of transferring large datasets between cloud providers. Here's a demo of the new feature and some documentation.

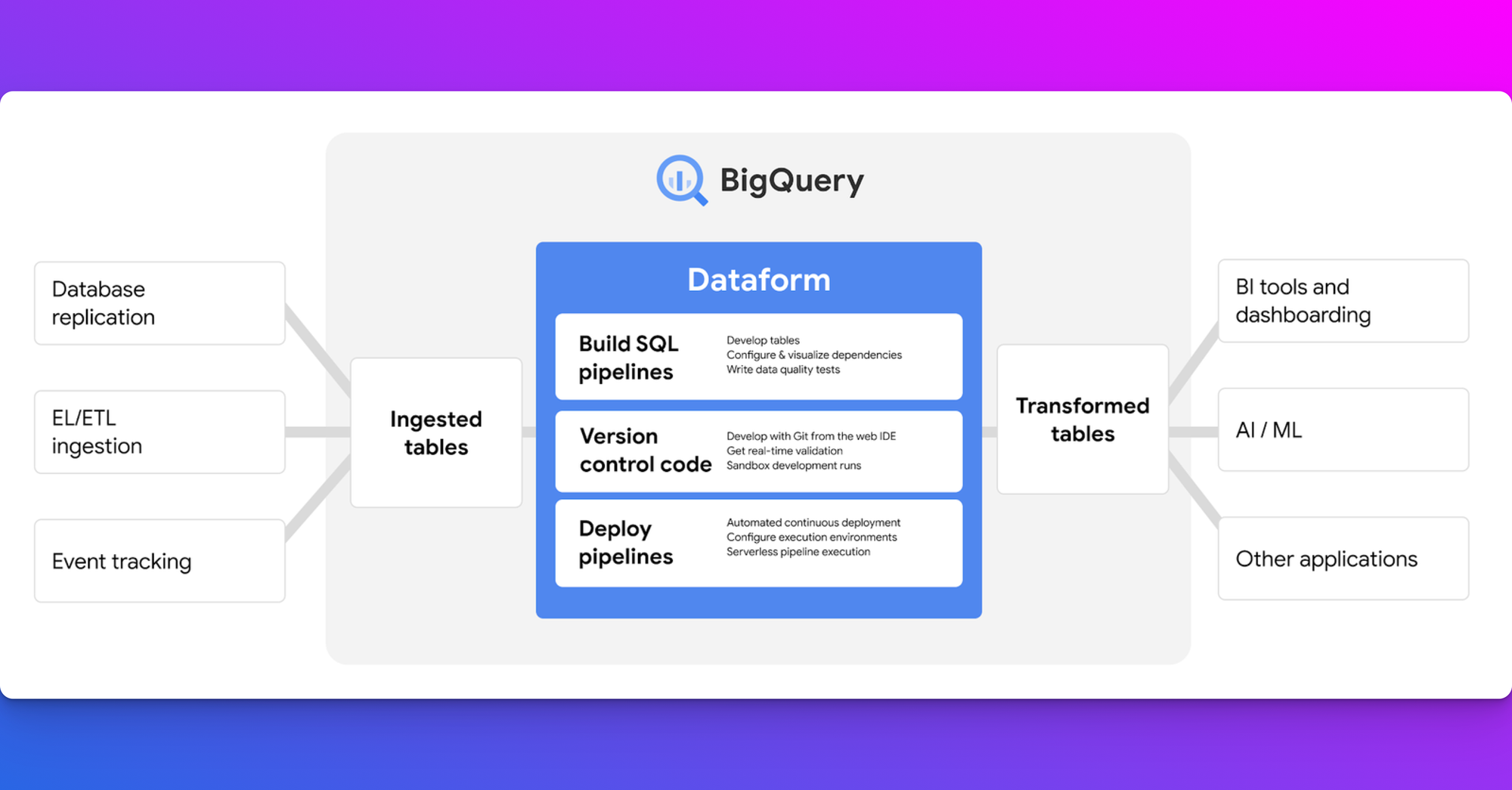

Dataform gaining increased spotlight in digital analytics

Recent newsletters have featured a significant number of articles from the digital analytics community about Dataform. For those unfamiliar with the tool: Dataform started out as a competitor to dbt and was acquired by Google in 2020.

Today, Dataform is fully integrated into Google Cloud, allowing data professionals to create and manage SQL workflows in BigQuery. These workflows transform raw data into the clean datasets that analytics and reporting depend on.
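A SQL workflow like this is essentially a dependency graph: in Dataform, a table that references another via `ref()` runs after it. The Python sketch below shows the underlying idea with a topological sort from the standard library's `graphlib`. The action names are hypothetical, and this illustrates the concept only, not how Dataform schedules actions internally.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each action maps to the set of actions it depends on,
# similar in spirit to dependencies declared with ref() in Dataform SQLX files.
WORKFLOW = {
    "events_raw": set(),                         # source: the GA4 export tables
    "stg_events": {"events_raw"},                # cleaned event-level table
    "sessions": {"stg_events"},                  # session-level table
    "daily_report": {"sessions", "stg_events"},  # reporting table
}


def execution_order(workflow: dict) -> list:
    """Return an order in which every action runs after all its dependencies.

    Raises graphlib.CycleError if the dependencies contain a cycle.
    """
    return list(TopologicalSorter(workflow).static_order())


if __name__ == "__main__":
    print(execution_order(WORKFLOW))
```

Modelling the workflow as a graph is what lets a tool like Dataform rebuild downstream tables automatically, in the right order, when an upstream table changes.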

There are several notable resources from the digital analytics community concerning Dataform:

- Artem Korneev has developed a Dataform package designed to prepare session and event tables using GA4 export data in BigQuery. This package includes comprehensive documentation and offers multiple helper functions for session attribution, channel grouping, and more.

- Rick Dronkers and Krisztián Korpa have created a video 'crash course' on Dataform, which provides an excellent introduction to the tool. They also offer a course covering BigQuery, server-side GTM, and Firestore.

- Yours truly is also in the process of creating a tutorial series on Dataform. These tutorials will be included in the GA4BigQuery premium subscription, so existing premium subscribers can access them at no additional cost. The series will show how to create and maintain a Dataform-powered SQL workflow for the GA4 export in BigQuery, and it is designed to be accessible even if you have no data engineering experience.

BigQuery Export Service Level Agreement (SLA) added

Google has added documentation for the Google Analytics 360 Service Level Agreement (SLA), specifically covering the BigQuery export. It focuses on the guaranteed arrival time of the daily BigQuery export for premium properties, which are classified as 'Normal' or 'Large' based on event collection volume. To be eligible for this SLA, you must select the 'Fresh daily' option in your GA4 BigQuery export configuration. However, this option isn't available for the largest category of properties, termed 'XLarge'.

Data can arrive within the SLA timeframe but without Google Ads attribution data. In that case, fields like traffic_source.source, traffic_source.medium, and traffic_source.name will contain the value 'Data Not Available'. You can then retrieve the missing data using the Google Click ID (gclid) through alternative methods such as the Google Ads API, Google Ads Scripts, or the BigQuery Data Transfer Service for Google Ads. The SLA is currently in beta, with plans to 'apply it in the future'.
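As a rough illustration of that fallback, the Python sketch below finds rows whose traffic_source fields read 'Data Not Available', extracts the gclid from the landing-page URL, and fills in the campaign from a gclid lookup. Several assumptions are involved here, all hypothetical: the flat row layout, reading the gclid from a `page_location` URL, the `gclid_to_campaign` mapping (which you might build from Google Ads click data), and attributing matched clicks as google / cpc.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

NOT_AVAILABLE = "Data Not Available"


def extract_gclid(page_location: str) -> Optional[str]:
    """Return the gclid query parameter from a URL, or None if absent."""
    values = parse_qs(urlparse(page_location).query).get("gclid")
    return values[0] if values else None


def backfill_traffic_source(rows: list, gclid_to_campaign: dict) -> list:
    """Fill traffic_source fields that arrived as 'Data Not Available'.

    rows: hypothetical dicts with 'page_location' and a 'traffic_source'
          dict holding 'source', 'medium' and 'name'.
    gclid_to_campaign: hypothetical mapping of gclid -> campaign name.
    Returns new rows; the input rows are not modified.
    """
    patched = []
    for row in rows:
        traffic_source = dict(row["traffic_source"])
        if NOT_AVAILABLE in traffic_source.values():
            gclid = extract_gclid(row.get("page_location", ""))
            if gclid in gclid_to_campaign:
                # Assumption: a matched Google Ads click is reported as google / cpc.
                traffic_source = {
                    "source": "google",
                    "medium": "cpc",
                    "name": gclid_to_campaign[gclid],
                }
        patched.append({**row, "traffic_source": traffic_source})
    return patched
```

Rows without a gclid, or with a gclid missing from the lookup, keep their original values. That way, gaps stay visible instead of being silently misattributed.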

Relevant blog posts and resources from the community

- GA4 - The CDP You Didn't Know You Had

- Everyone is a CDP now

- Join Google Ads and GA4 data in Google BigQuery

- A Comprehensive Guide to Error Handling and Alerting in Dataform

- GA4 Query Optimization Strategies with Dataform

- What do I mean by streaming sGTM into BigQuery?

- How Does BigQuery Data Import for Google Analytics 4 Differ from Universal Analytics?

- BigQuery + GA4: How To Get the First or Most Recent Event for a User

- GA4 and HyperLogLog: the good, the bad and the ugly

- Using BigQuery and GA4: Thoughts on Audience Segmentation (Part 2) Solutions

- When Will Yesterday’s GA4 Data Be Ready? BigQuery Says Bye Bye to Lag

- More Ways to Create Incremental Tables in Dataform

- How to Setup a GCP Dataform Project from Scratch + Connect to GA4

- Easily Modify Data in BigQuery Tables w/ Google Sheets

- Unlock the power of your marketing data with Snowflake connector for Google Analytics 4

That's it for now. Thanks for reading and happy querying!

Best regards,

Johan