How to create and maintain a Dataform powered GA4 export SQL workflow in BigQuery

Dataform is revolutionizing the way data professionals work with BigQuery, especially when it comes to managing GA4 export data. Think of it as a tool that seamlessly blends with BigQuery to transform the raw GA4 event data into a well-defined and actionable format.

If you are leveraging your GA4 export data for a while now, chances are that you have a couple of scheduled queries running, producing multiple tables that power reports for your organization.

This approach can make it challenging to manage complex data dependencies and transformations, potentially resulting in inefficient and error-prone workflows. Especially when you join multiple data sources, like your CRM, transactions, web analytics, advertisment and social platforms.

Time to step up your game and learn how to use Dataform. The best part: it is already fully integrated in Google Cloud.

What is Dataform and why should you start using it

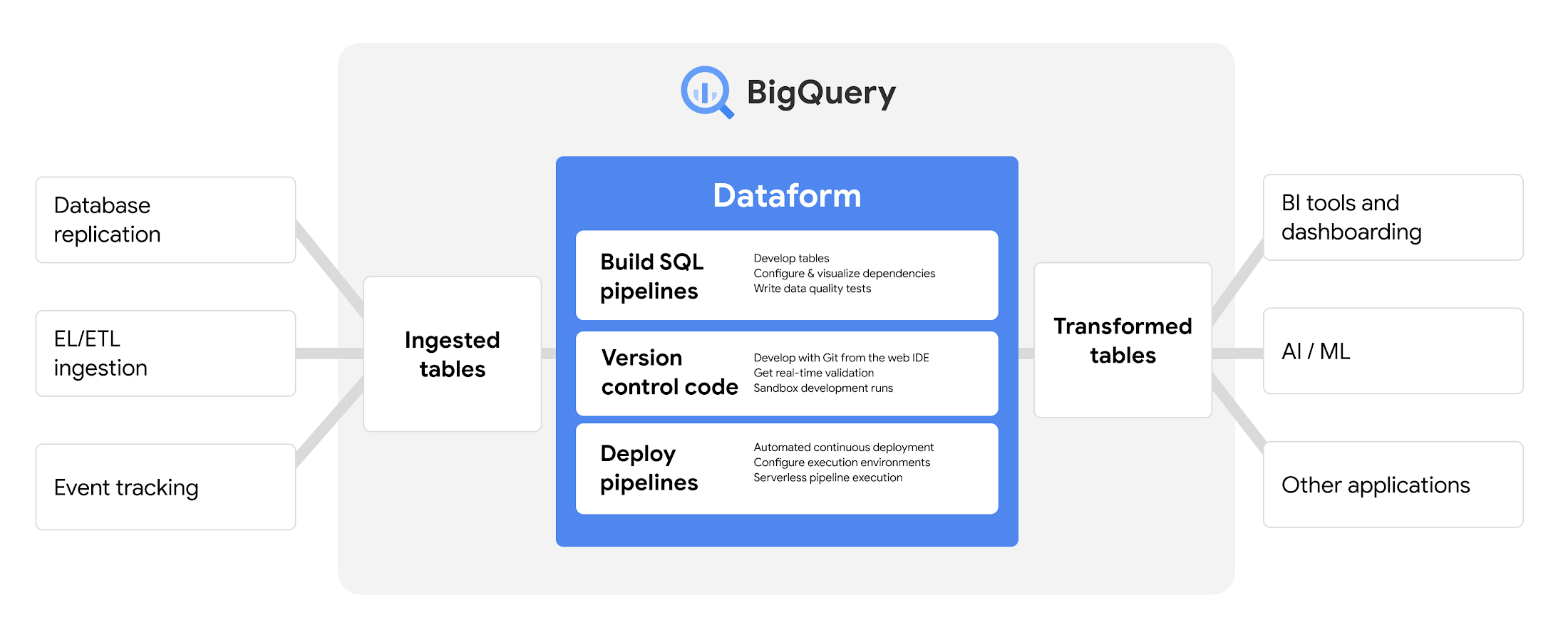

Dataform is a tool like dbt, you can build and deploy pipelines to process and analyze (GA4 export) data, ensuring data accuracy and accessibility without the need for extensive technical knowledge or infrastructure management.

(1) Raw data is extracted from source systems and (2) loaded into the data warehouse, then (3) transformed within the data warehouse.

You use Dataform to manage that last part: data transformation in your data warehouse.

Dataform simplifies the transformation of (GA4 export) data in BigQuery, enabling data professionals to:

- Develop and execute SQL workflows: perfect for transforming raw GA4 export data into insightful tables

- Collaborative workflow development: teams can co-develop SQL workflows, leveraging git for version control

- Efficient data management: handle numerous tables and/or views and their complex dependencies with ease

- Reduce costs: apply cost reducing practices like incremental loading and partitioning

- Enhanced visualization: view a clear dependency tree (lineage graph) of your SQL workflow

- Reusable code: utilize JavaScript to reduce redundancy in your workflows (functions, variables)

- Quality assurance: run tests on data sources and outputs, ensuring the reliability of your data outputs

For all these reasons, we believe it is essential for data professionals to learn how to automate their data workflow. As Dataform is already embedded in the Google Cloud ecosystem, the service comes at no extra costs.

Of course you will be billed for querying and storage, just like in any BigQuery environment, but this depends on various factors. Dataform itself helps in managing and optimizing SQL queries and workflows. Efficient query management can actually reduce costs by streamlining processes.

Learning to use Dataform is a smart move for data professionals as it enhances their skill set in managing complex SQL workflows, an essential aspect of data engineering and analytics.