How to query streaming GA4 export data and combine it with historical data in BigQuery

Businesses are finding it important to analyse data in near real-time in order to make better decisions. One way to do this is by using BigQuery streaming export to combine GA4 intraday tables with daily tables, which allows for up-to-date insights.

Analysing data in near real-time is becoming increasingly important for businesses to make the best decisions for their organisation. To get the most up-to-date insights, combining intraday tables with daily tables in Google Analytics export data in BigQuery can be a practical use case.

BigQuery streaming export (if configured in the linking process) makes data for the current day available within a few minutes.

For each day, streaming export creates one new table:events_intraday_YYYYMMDD. An internal staging table that includes records of session activity that took place during the day. Streaming export is a best-effort operation and may not include all data for reasons such as the processing of late events and/or failed uploads. Data is exported continuously throughout the day. This table can include records of a session when that session spans multiple export operations. This table is deleted whenevents_YYYYMMDDis complete. (source)

Limitations

Good to be aware of: the streaming export does not include user-attribution data for new users, such traffic_source.medium. For existing users, their user-attribution data is included but it takes approximately 24 hours to be fully processed. Therefore, it is recommended to not rely on the data from the streaming export and instead obtain user-attribution data from the full daily export.

Example query (intraday only)

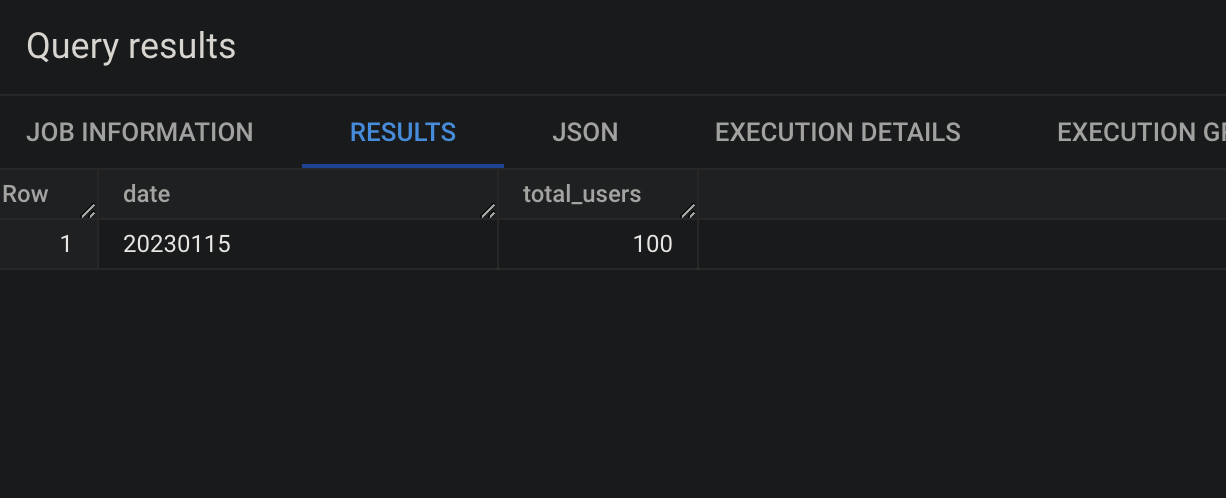

Querying an intraday table is fairly simple. In this case we query the table for Jan 15th, 2023.

select

event_date as date,

count(distinct user_pseudo_id) as total_users

from

`ga4bigquery.analytics_250794857.events_intraday_20230115`

group by

date

Example query (combined)

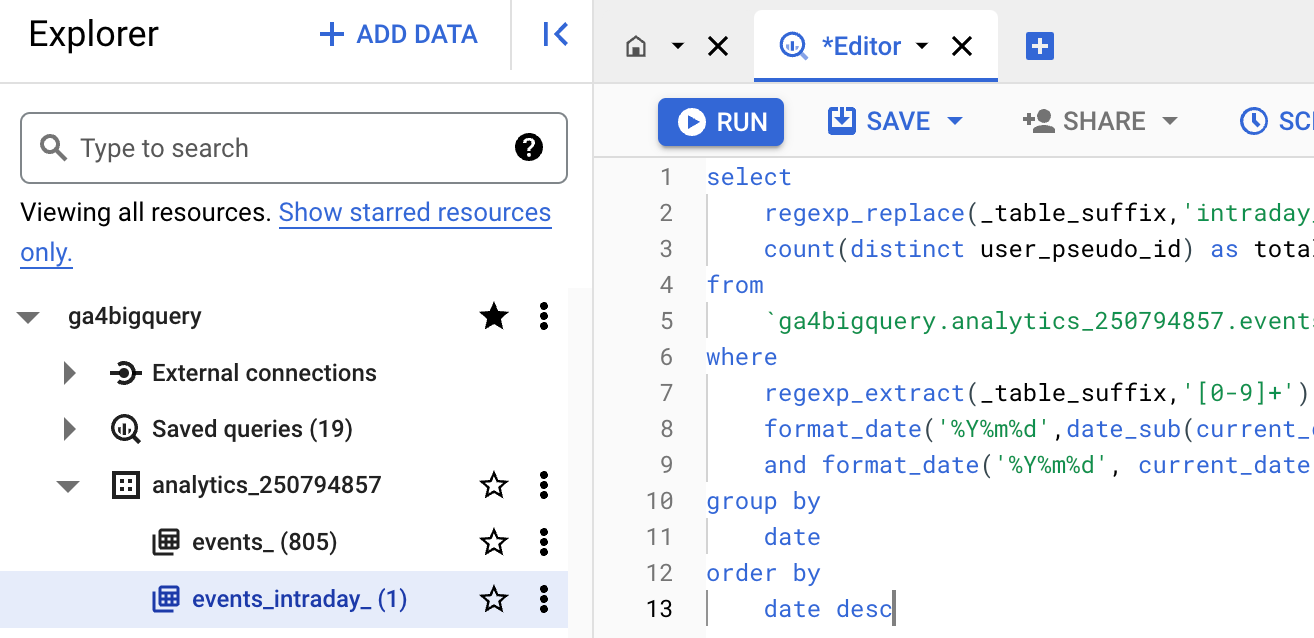

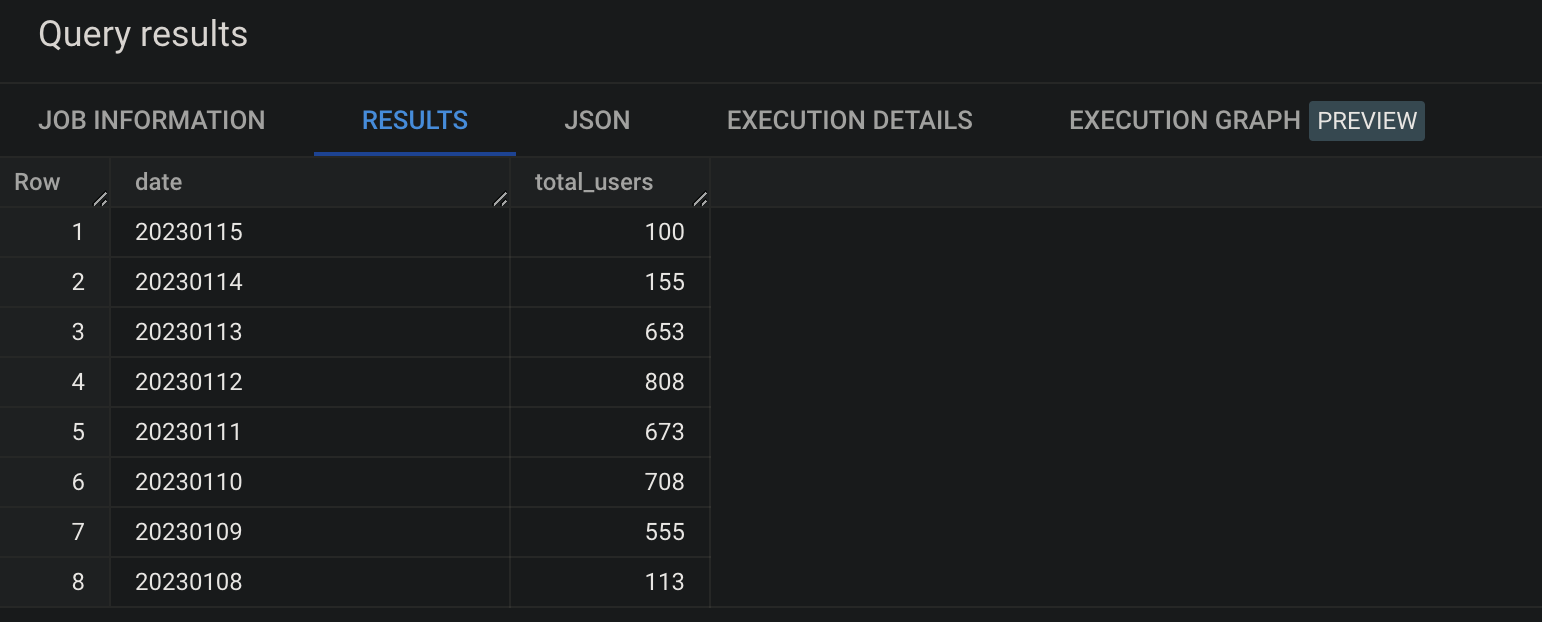

The easiest way to include intraday data in your query as well as historical data is use the wildcard in combination with a wider _table_suffix filter. With this condition it will include any table in the data set that starts with events_ and contains a date with the format YYYYMMDD.

In the following example the current_date() is 2023-01-15. The regexp_extract(_table_suffix,'[0-9]+') in the where clause makes sure we can query the dates we specified in the dynamic date range.

If we don't apply a regexp_replace for the first column then the date value for row 1 would be intraday_20230115 instead of the format of the other dates, e.g. 20230114. This is because the _table_suffix returns all characters after events_.

select

regexp_replace(_table_suffix,'intraday_','') as date,

count(distinct user_pseudo_id) as total_users

from

`ga4bigquery.analytics_250794857.events_*`

where

regexp_extract(_table_suffix,'[0-9]+') between

format_date('%Y%m%d',date_sub(current_date(), interval 7 day))

and format_date('%Y%m%d', current_date())

group by

date

order by

date desc

Now it's your turn!

I hope you've enjoyed this and feel a bit more confident to utilise your own Google Analytics data in BigQuery. Drop a line in the comments if you have any questions, feedback or suggestions related to this article.